We are all familiar with the acronym GIGO (Garbage-in-garbage-out). It applies to various phases of the Market Research process, including Audio-recording of data. Simplistically put – the better the quality of audio-recording of any interaction, the better its transcript.

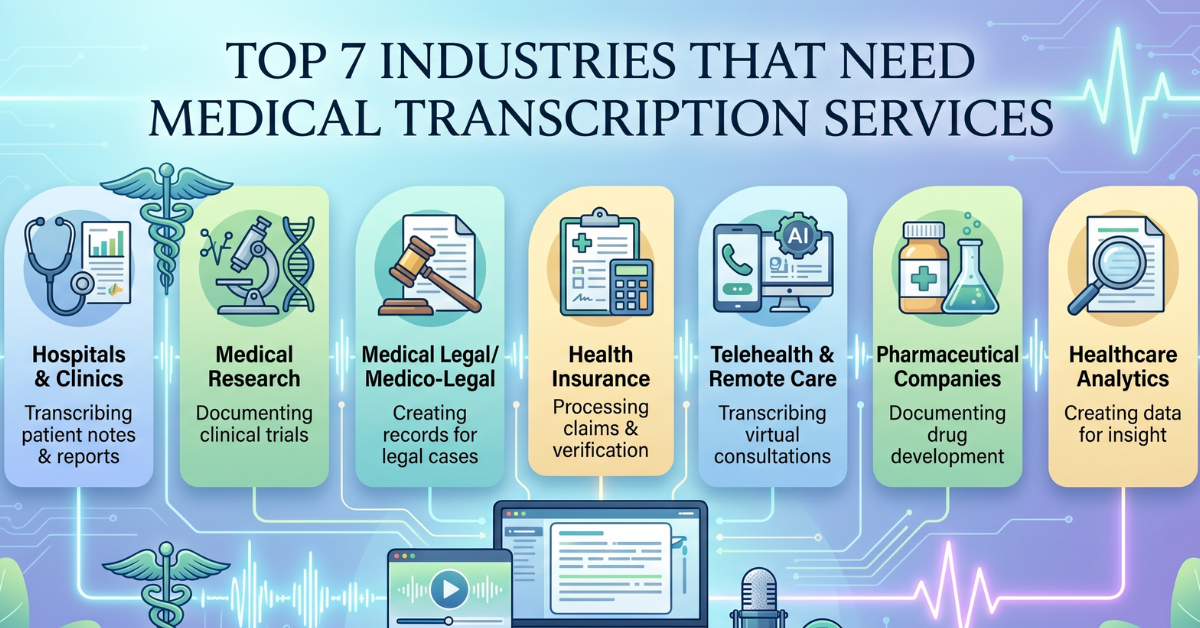

Various sectors use audio-recording for data-collection, for various purposes… be it documentation or further analysis. For instance; Healthcare sector could require transcription of Physician notes, Legal-related businesses for transcription of depositions, Media sector for subtitling, Market Research for transcription of FGDs/ in-depth interviews with respondents.

The repercussions of poor audio-quality can run wide and deep. For instance, in the Legal/ Healthcare context – inaccurate transcription could majorly affect outcomes of litigation or investigation into medical misconduct. Similarly, an inaccurate FGD transcript could result in poor quality analysis and reporting.

Audio-quality often determines transcribers’ grasp of words, emotions, engagement of respondents during interviews. Indirectly therefore, it impacts the analyst’s understanding of what was said and how it was expressed. Taken further, such miscomprehension could make insight-generation a challenging task.

Transcripts often provide ‘evidence’ of insights drawn by analyst. Audio notes and respondent verbatims can provide credibility and depth, when calling out an insight during reporting stage. Good audio quality ensures full capture of raw data, leading to more detailed and accurate reporting.

Simplistically put, the more accurate the transcription, the meatier the insight-generation and reporting.

Thanks to the availability of technological advances like Speech Recognition and Speech-to-text AI in Research Tech (ResTech) products; enhanced audio-quality is now within reach for Market Research industry. Typically, audio data goes through three stages from the time it is captured to the time it is analysed:

- Audio-data capture

- Audio-data cleaning/ restoration

- Transcription of audio-data, be it Manual or Tech-enabled.

Researchers have most control over audio-data, at the capture stage. Data capture might be done online or offline. We have observed most online data capture to be of good quality. It is the offline

data capture where there is a lot of improvement scope. And This is where 5 simple hacks can work wonders, at the data-capture stage:

- Mic audit before the focus group/ interview starts: This helps ascertain whether the device being used for recording is primed to capture good quality of audio. Checking mic input settings on the recording device helps identify whether the mic’s volume settings are appropriate for data capture.

- Invest in video and audio recording devices, instead of relying on mobile phones for recording. While mobile phone recorders are a handy option; at best, they should serve as a back-up, rather than being the main recording device.

- Separate mic for each speaker Particularly with FGDs, there is a risk of leaving out respondents seated away from the mic. Also, considering there are multiple people seated together, the chances of capturing ambient noises are higher. Thus, it is advisable to provide for multiple mics, placed to cover all voices participating in the discussion.

- Check for echo: The size of a room plays a role in the recording quality of audio-data. Using collar mics helps avoid echo interfering with transcription.

- Minimisation of ambient indoor/ outdoor sounds: There are times when everyday noises eg. air-conditioner humming, roadside traffic sounds, voices other than interviewer and respondent; could interfere with the recording quality. Not all such interference can be predicted and/ or eliminated, all the time. Yet, the objective is to be watchful of these and minimize them as and when they arise.

- Tackling cross-talking: Asking respondents to speak one-by-one could dilute group dynamics. An effective way to allow free-flowing expression of opinions is to use individual mics/ headsets for every speaker. Certain platforms also allow the host to adjust audio settings of respondents. This helps ensure good quality of data-capture, despite cross-talk.

When it comes to data cleaning/ restoration/ transcription, at MyTranscriptionPlace, we now offer various workarounds, to address the dual challenges of timely and accurate transcription of audio data.

- Limit-free volume amplification technology to enhance audio quality, to make distorted/ echoing/ polluted voice recordings sound clear, crisp, professionally recorded

- Speech recognition technology that enables us to automate the transcription process, so as to handle transcription volumes in a shorter period of time.

- AI helps us provide time-stamped, interactive transcripts for transcribers to edit and bring closer to accuracy.

- Peer-checking by another native transcriber, with an added layer of proof-checking by experienced Quality Managers.

All in all, audio-quality is best controlled at data-capture stage. At later stages, it is often possible to enhance audio-quality and arrive at transcripts that help deliver credible insights and bring them to life.

Try our Free Audio Enhancer